Knot Resolver library¶

Requirements¶

libknot 2.0 (Knot DNS high-performance DNS library.)

For users¶

The library provides basic services for name resolution, which should cover most use cases. Examples are in the resolve API documentation.

Tip

If you’re migrating from getaddrinfo(), see the “synchronous” API. The library also offers an iterative API that you can plug into your own event loop, for example.

For developers¶

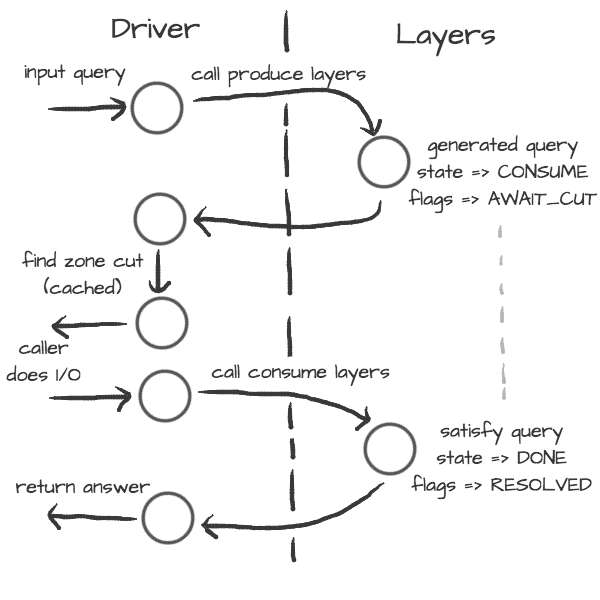

The resolution process starts with the functions in resolve.c, which are responsible for:

reacting to state machine state (e.g. calling consume layers if we have an answer ready)

interacting with the library user (e.g. asking the caller for I/O, accepting queries)

fetching assets needed by layers (e.g. zone cut)

This is the driver. The driver is not meant to know “how” the query resolves, but rather “when” to execute “what”.

On the other side are layers. They are responsible for dissecting the packets and informing the driver about the results. For example, a produce layer generates a query, and a consume layer validates an answer.

Tip

Layers are executed asynchronously by the driver. If you need some asset beforehand, you can signal the driver by returning a state or by setting flags on the current query. For example, setting the AWAIT_CUT flag forces the driver to fetch zone cut information before the packet is consumed; setting the RESOLVED flag makes it pop a query after the current set of layers is finished; returning the FAIL state makes it fail the current query.

Layers can also change the course of resolution, for example by appending additional queries.

consume = function (state, req, answer)

if answer:qtype() == kres.type.NS then

local qry = req:push(answer:qname(), kres.type.SOA, kres.class.IN)

qry.flags.AWAIT_CUT = true

end

return state

end

This doesn’t block the currently processed query, and the newly created sub-request will start as soon as the driver finishes processing the current one. In some cases you might need to issue a sub-request and process it before continuing with the current query, e.g. a validator may need a DNSKEY before it can validate signatures. In this case, layers can yield and resume afterwards.

consume = function (state, req, answer)

if state == kres.YIELD then

print('continuing yielded layer')

return kres.DONE

else

if answer:qtype() == kres.type.NS then

local qry = req:push(answer:qname(), kres.type.SOA, kres.class.IN)

qry.flags.AWAIT_CUT = true

print('planned SOA query, yielding')

return kres.YIELD

end

return state

end

end

The YIELD state is a bit special. When a layer returns it, it interrupts the current walk through the layers. When the layer receives it, it means that it yielded before and is now being resumed. This is useful in a situation where you need a sub-request to determine whether the current answer is valid or not.
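
The yield and resume behaviour described above can be sketched in C. This is a minimal model, not the driver from resolve.c: the `state` enum, `layer_fn`, `struct walk` and `walk_layers()` are hypothetical names introduced only for illustration, and the real driver keeps more context per query.

```c
#include <stddef.h>

/* Hypothetical stand-ins for the library's layer states. */
enum state { ST_CONSUME, ST_DONE, ST_FAIL, ST_YIELD };

/* A layer callback receives the incoming state and returns the next one. */
typedef enum state (*layer_fn)(enum state in, void *data);

struct walk {
	size_t resume_at;   /* index of the layer that yielded */
	int    yielded;     /* nonzero while a layer is suspended */
};

/* Walk the layers in order. A layer returning ST_YIELD interrupts the walk;
 * the next call re-enters that same layer with ST_YIELD as its input. */
static enum state walk_layers(struct walk *w, const layer_fn *layers,
                              size_t count, enum state state, void *data)
{
	size_t i = 0;
	if (w->yielded) {
		i = w->resume_at;
		state = ST_YIELD;
		w->yielded = 0;
	}
	for (; i < count; ++i) {
		state = layers[i](state, data);
		if (state == ST_YIELD) {
			w->resume_at = i;
			w->yielded = 1;
			return state;
		}
	}
	return state;
}

/* Example layer: yields once (as if waiting for a sub-request), then
 * finishes when it is resumed. It counts its calls through `data`. */
static enum state needs_subrequest(enum state in, void *data)
{
	int *calls = data;
	++*calls;
	return (in == ST_YIELD) ? ST_DONE : ST_YIELD;
}

/* Example layer: passes the state through unchanged. */
static enum state pass_through(enum state in, void *data)
{
	(void)data;
	return in;
}
```

Calling `walk_layers()` twice with the layers `{ needs_subrequest, pass_through }` stops at the first layer on the first call and continues from that same layer on the second, so the layers after it only ever see the state produced once the yielding layer has finished.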

Writing layers¶

Warning

FIXME: this dev-docs section is outdated! Better see comments in files instead, for now.

The resolver library leverages the processing API from libknot to separate packet processing code into layers.

Note

This is only a crash course in the library internals; see the resolver library documentation for the complete overview of the services.

The library offers the following services:

Cache - MVCC cache interface for retrieving/storing resource records.

Resolution plan - Query resolution plan, a list of partial queries (with hierarchy) sent in order to satisfy original query. This contains information about the queries, nameserver choice, timing information, answer and its class.

Nameservers - Reputation database of nameservers, this serves as an aid for nameserver choice.

A processing layer is going to be called by the query resolution driver for each query, so you’re going to work with struct kr_request as your per-query context. This structure contains pointers to resolution context, resolution plan and also the final answer.

int consume(kr_layer_t *ctx, knot_pkt_t *pkt)

{

struct kr_request *req = ctx->req;

struct kr_query *qry = req->current_query;

}

This is only passive processing of the incoming answer. If you want to change the course of resolution, say satisfy a query from a local cache before the library issues a query to the nameserver, you can use states (see the Static hints for example).

int produce(kr_layer_t *ctx, knot_pkt_t *pkt)

{

struct kr_request *req = ctx->req;

struct kr_query *qry = req->current_query;

/* Query can be satisfied locally. */

if (can_satisfy(qry)) {

/* This flag makes the resolver move the query

* to the "resolved" list. */

qry->flags.RESOLVED = true;

return KR_STATE_DONE;

}

/* Pass-through. */

return ctx->state;

}

It is possible to not only act during the query resolution, but also to view the complete resolution plan afterwards. This is useful for analysis-type tasks, or “per answer” hooks.

int finish(kr_layer_t *ctx)

{

struct kr_request *req = ctx->req;

struct kr_rplan *rplan = req->rplan;

/* Print the query sequence with start time. */

char qname_str[KNOT_DNAME_MAXLEN];

struct kr_query *qry = NULL;

WALK_LIST(qry, rplan->resolved) {

knot_dname_to_str(qname_str, qry->sname, sizeof(qname_str));

printf("%s at %u\n", qname_str, qry->timestamp);

}

return ctx->state;

}

APIs in Lua¶

The APIs in the Lua world try to mirror the C APIs using LuaJIT FFI, with several differences and enhancements. There is no comprehensive guide to the API yet, but you can have a look at the bindings file.

Elementary types and constants¶

States are directly in the kres table, e.g. kres.YIELD, kres.CONSUME, kres.PRODUCE, kres.DONE, kres.FAIL.

DNS classes are in the kres.class table, e.g. kres.class.IN for Internet class.

DNS types are in the kres.type table, e.g. kres.type.AAAA for AAAA type.

DNS rcodes are in the kres.rcode table, e.g. kres.rcode.NOERROR.

Extended DNS error codes are in the kres.extended_error table, e.g. kres.extended_error.BLOCKED.

Packet sections (QUESTION, ANSWER, AUTHORITY, ADDITIONAL) are in the kres.section table.

Working with domain names¶

The internal API usually works with domain names in wire (label) format; you can convert freely between the text and wire representations.

local dname = kres.str2dname('business.se')

local strname = kres.dname2str(dname)
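
To make the wire (label) format concrete, here is a small self-contained C sketch of the text-to-wire direction. It is not the library's or libknot's converter: `text_to_wire()` is a hypothetical helper written for this example, and it ignores escape sequences and case handling.

```c
#include <stddef.h>
#include <string.h>

/* Convert a presentation-format name such as "business.se" into DNS wire
 * format: each label is prefixed with its length and the name ends with a
 * zero-length root label. Escapes (e.g. "\.") are not handled here.
 * Returns the number of bytes written, or 0 on error. */
static size_t text_to_wire(const char *text, unsigned char *wire, size_t wire_len)
{
	size_t out = 0;
	const char *label = text;
	if (label[0] == '.' && label[1] == '\0')
		++label;            /* "." alone is the root name */
	while (*label != '\0') {
		const char *dot = strchr(label, '.');
		size_t len = dot ? (size_t)(dot - label) : strlen(label);
		size_t need = out + 1 + len + 1;   /* label plus final root byte */
		/* Reject empty or oversized labels, names over 255 octets,
		 * and output that would not fit into the buffer. */
		if (len == 0 || len > 63 || need > 255 || need > wire_len)
			return 0;
		wire[out++] = (unsigned char)len;
		memcpy(wire + out, label, len);
		out += len;
		label += len;
		if (*label == '.')
			++label;    /* skip the separator (also a trailing dot) */
	}
	if (out + 1 > wire_len)
		return 0;
	wire[out++] = 0;            /* root label terminates the name */
	return out;
}
```

For "business.se" this writes the length byte 8, the bytes of "business", the length byte 2, the bytes of "se", and a final zero byte. The Lua string '\7blocked' that appears in the packet examples follows the same convention: a length byte of 7 followed by the label "blocked".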

Working with resource records¶

Resource records are stored as tables.

local rr = { owner = kres.str2dname('owner'),

ttl = 0,

class = kres.class.IN,

type = kres.type.CNAME,

rdata = kres.str2dname('someplace') }

print(kres.rr2str(rr))

RRSets in a packet can be accessed using FFI, and you can easily fetch single records.

local rrset = { ... }

local rr = rrset:get(0) -- Return first RR

print(kres.dname2str(rr:owner()))

print(rr:ttl())

print(kres.rr2str(rr))

Working with packets¶

The packet is the data structure that you’re going to see in layers very often. It consists of a header and four sections: QUESTION, ANSWER, AUTHORITY, ADDITIONAL. The first section is special, as it contains the query name, type, and class; the rest of the sections contain RRSets.

First you need to convert it to a type known to FFI and check basic properties. Let’s start with a snippet of a consume layer.

consume = function (state, req, pkt)

print('rcode:', pkt:rcode())

print('query:', kres.dname2str(pkt:qname()), pkt:qclass(), pkt:qtype())

if pkt:rcode() ~= kres.rcode.NOERROR then

print('error response')

end

end

You can enumerate records in the sections.

local records = pkt:section(kres.section.ANSWER)

for i = 1, #records do

local rr = records[i]

if rr.type == kres.type.AAAA then

print(kres.rr2str(rr))

end

end

During produce or begin, you might want to write to the packet. Keep in mind that you have to write packet sections in sequence, e.g. you can’t write to ANSWER after writing AUTHORITY; it’s like stages where you can’t go back.

pkt:rcode(kres.rcode.NXDOMAIN)

-- Clear answer and write QUESTION

pkt:recycle()

pkt:question('\7blocked', kres.class.IN, kres.type.SOA)

-- Start writing data

pkt:begin(kres.section.ANSWER)

-- Nothing in answer

pkt:begin(kres.section.AUTHORITY)

local soa = { owner = '\7blocked', ttl = 900, class = kres.class.IN, type = kres.type.SOA, rdata = '...' }

pkt:put(soa.owner, soa.ttl, soa.class, soa.type, soa.rdata)
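
The "stages where you can’t go back" rule can be modelled in a few lines of C. This is only a sketch of the ordering constraint: `struct pkt_writer`, `writer_begin()` and `writer_put()` are hypothetical names, not part of libknot or of the Lua bindings.

```c
#include <stdbool.h>

/* Hypothetical section identifiers, ordered as they appear on the wire. */
enum section { SEC_QUESTION = 0, SEC_ANSWER, SEC_AUTHORITY, SEC_ADDITIONAL };

struct pkt_writer {
	enum section current;       /* section currently being written */
	unsigned     counts[4];     /* records written per section */
};

/* Move to a section; going back to an earlier one is refused. */
static bool writer_begin(struct pkt_writer *w, enum section s)
{
	if (s < w->current)
		return false;
	w->current = s;
	return true;
}

/* Account for one record in the current section. */
static void writer_put(struct pkt_writer *w)
{
	w->counts[w->current]++;
}
```

Starting a writer in SEC_QUESTION, moving to SEC_ANSWER and then SEC_AUTHORITY succeeds, while a later `writer_begin(&w, SEC_ANSWER)` returns false, mirroring the sequence used in the Lua snippet above.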

Working with requests¶

The request holds information about the currently processed query, enabled options, cache, and other extra data. You primarily need to retrieve the currently processed query.

consume = function (state, req, pkt)

print(req.options)

print(req.state)

-- Print information about current query

local current = req:current()

print(kres.dname2str(current.owner))

print(current.stype, current.sclass, current.id, current.flags)

end

In layers that either begin or finalize, you can walk the list of resolved queries.

local last = req:resolved()

print(last.stype)

As described in the layers, you can not only retrieve information about current query, but also push new ones or pop old ones.

-- Push new query

local qry = req:push(pkt:qname(), kres.type.SOA, kres.class.IN)

qry.flags.AWAIT_CUT = true

-- Pop the query, this will erase it from resolution plan

req:pop(qry)

Significant Lua API changes¶

Incompatible changes since 3.0.0¶

In the main kres.* lua binding, there was only one change, in struct knot_rrset_t:

constructor now accepts TTL as additional parameter (defaulting to zero)

add_rdata() doesn’t accept TTL anymore (and will throw an error if passed)

In case you used knot_* functions and structures bound to lua:

knot_dname_is_sub(a, b): knot_dname_in_bailiwick(a, b) > 0

knot_rdata_rdlen(): knot_rdataset_at().len

knot_rdata_data(): knot_rdataset_at().data

knot_rdata_array_size(): offsetof(struct knot_data_t, data) + knot_rdataset_at().len

struct knot_rdataset: field names were renamed to .count and .rdata

some functions got inlined from headers, but you can use their kr_* clones: kr_rrsig_sig_inception(), kr_rrsig_sig_expiration(), kr_rrsig_type_covered(). Note that these functions now accept knot_rdata_t* instead of a pair knot_rdataset_t* and size_t - you can use knot_rdataset_at() for that.

knot_rrset_add_rdata() doesn’t take TTL parameter anymore

knot_rrset_init_empty() was inlined, but in lua you can use the constructor

knot_rrset_ttl() was inlined, but in lua you can use :ttl() method instead

knot_pkt_qname(), _qtype(), _qclass(), _rr(), _section() were inlined, but in lua you can use methods instead, e.g. myPacket:qname()

knot_pkt_free() takes knot_pkt_t* instead of knot_pkt_t**, but from lua you probably didn’t want to use that; constructor ensures garbage collection.

API reference¶

Warning

This section is generated with doxygen and breathe. Due to their limitations, some symbols may be incorrectly described or missing entirely. For exhaustive and accurate reference, refer to the header files instead.

Name resolution¶

The library provides a “consumer-producer”-like interface that enables the user to plug it into an existing event loop or I/O code.

Example usage of the iterative API:¶

// Create request and its memory pool

struct kr_request req = {

.pool = {

.ctx = mp_new (4096),

.alloc = (mm_alloc_t) mp_alloc

}

};

// Setup and provide input query

int state = kr_resolve_begin(&req, ctx);

state = kr_resolve_consume(&req, query);

// Generate answer

while (state == KR_STATE_PRODUCE) {

// Additional query generated, do the I/O and pass back the answer

state = kr_resolve_produce(&req, &addr, &type, query);

while (state == KR_STATE_CONSUME) {

int ret = sendrecv(addr, type, query, resp);

// If I/O fails, make "resp" empty

state = kr_resolve_consume(&req, addr, resp);

knot_pkt_clear(resp);

}

knot_pkt_clear(query);

}

// "state" is either DONE or FAIL

kr_resolve_finish(&req, state);

Defines

-

kr_request_selected(req)¶

Initializer for an array of *_selected.

Typedefs

-

typedef uint8_t *(*alloc_wire_f)(struct kr_request *req, uint16_t *maxlen)¶

Allocate buffer for answer’s wire (*maxlen may get lowered).

Motivation: XDP wire allocation is an overlap of library and daemon:

it needs to be called from the library

it needs to rely on some daemon’s internals

the library (currently) isn’t allowed to directly use symbols from daemon (contrary to modules), e.g. some of our lib-using tests run without daemon

Note: after we obtain the wire, we’re obliged to send it out. (So far there’s no use case to allow cancelling at that point.)

-

typedef bool (*addr_info_f)(struct sockaddr*)¶

-

typedef void (*async_resolution_f)(knot_dname_t*, enum knot_rr_type)¶

-

typedef see_source_code kr_sockaddr_array_t¶

Enums

-

enum kr_rank¶

RRset rank - for cache and ranked_rr_*.

The rank meaning consists of one independent flag - KR_RANK_AUTH, and the rest have meaning of values where only one can hold at any time. You can use one of the enums as a safe initial value, optionally | KR_RANK_AUTH; otherwise it’s best to manipulate ranks via the kr_rank_* functions.

See also: https://tools.ietf.org/html/rfc2181#section-5.4.1 https://tools.ietf.org/html/rfc4035#section-4.3

Note

The representation is complicated by restrictions on integer comparison:

AUTH must be > than !AUTH

AUTH INSECURE must be > than AUTH (because it attempted validation)

!AUTH SECURE must be > than AUTH (because it’s valid)

Values:

-

enumerator KR_RANK_INITIAL¶

Did not attempt to validate.

It’s assumed compulsory to validate (or prove insecure).

-

enumerator KR_RANK_OMIT¶

Do not attempt to validate.

(And don’t consider it a validation failure.)

-

enumerator KR_RANK_TRY¶

Attempt to validate, but failures are non-fatal.

-

enumerator KR_RANK_INDET¶

Unable to determine whether it should be secure.

-

enumerator KR_RANK_BOGUS¶

Ought to be secure but isn’t.

-

enumerator KR_RANK_MISMATCH¶

-

enumerator KR_RANK_MISSING¶

No RRSIG found for that owner+type combination.

-

enumerator KR_RANK_INSECURE¶

Proven to be insecure, i.e. we have a chain of trust from TAs that cryptographically denies the possibility of existence of a positive chain of trust from the TAs to the record. Or it may be covered by a closer negative TA.

-

enumerator KR_RANK_AUTH¶

Authoritative data flag; the chain of authority was “verified”.

Even if not set, only in-bailiwick stuff is acceptable, i.e. almost authoritative (example: mandatory glue and its NS RR).

-

enumerator KR_RANK_SECURE¶

Verified whole chain of trust from the closest TA.

Functions

-

bool kr_rank_check(uint8_t rank)¶

Check that a rank value is valid.

Meant for assertions.

-

bool kr_rank_test(uint8_t rank, uint8_t kr_flag)¶

Test the presence of any flag/state in a rank, i.e. including KR_RANK_AUTH.

-

static inline void kr_rank_set(uint8_t *rank, uint8_t kr_flag)¶

Set the rank state.

The _AUTH flag is kept as it was.
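
The "one independent flag plus mutually exclusive values" layout can be illustrated with a short C sketch. The names `RANK_*`, `rank_test()` and `rank_set()` are hypothetical, and the numeric values are assumptions chosen only so that the ordering constraints from the note on kr_rank hold; consult the header for the real definitions.

```c
#include <stdbool.h>
#include <stdint.h>

/* Illustrative encoding only; the real values live in the library header. */
enum {
	RANK_INITIAL  = 0,
	RANK_BOGUS    = 5,
	RANK_INSECURE = 8,
	RANK_AUTH     = 16,  /* independent flag bit */
	RANK_SECURE   = 32
};

/* Test either the AUTH flag or the exclusive state value. */
static bool rank_test(uint8_t rank, uint8_t flag)
{
	if (flag == RANK_AUTH)
		return (rank & RANK_AUTH) != 0;
	/* All other values are exclusive: compare with AUTH masked out. */
	return (rank & (uint8_t)~RANK_AUTH) == flag;
}

/* Replace the state value while keeping the AUTH bit as it was. */
static void rank_set(uint8_t *rank, uint8_t state)
{
	*rank = (uint8_t)(state | (*rank & RANK_AUTH));
}
```

With this encoding, a rank of `RANK_AUTH | RANK_INSECURE` compares greater than plain `RANK_AUTH`, and `RANK_SECURE` without the flag compares greater than both, which matches the integer-comparison restrictions listed for the enum.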

-

int kr_resolve_begin(struct kr_request *request, struct kr_context *ctx)¶

Begin name resolution.

Note

Expects a request to have an initialized mempool.

- Parameters:

request – request state with initialized mempool

ctx – resolution context

- Returns:

CONSUME (expecting query)

-

knot_rrset_t *kr_request_ensure_edns(struct kr_request *request)¶

Ensure that request->answer->opt_rr is present if query has EDNS.

This function should be used after clearing a response packet to ensure its opt_rr is properly set. Returns the opt_rr (for convenience) or NULL.

-

knot_pkt_t *kr_request_ensure_answer(struct kr_request *request)¶

Ensure that request->answer is usable, and return it (for convenience).

It may return NULL, in which case it marks ->state with _FAIL and no answer will be sent. Only use this when it’s guaranteed that there will be no delay before sending it. You don’t need to call this in places where “resolver knows” that there will be no delay, but even there you need to check if the ->answer is NULL (unless you check for _FAIL anyway).

-

int kr_resolve_consume(struct kr_request *request, struct kr_transport **transport, knot_pkt_t *packet)¶

Consume input packet (may be either first query or answer to query originated from kr_resolve_produce())

Note

If the I/O fails, provide an empty or NULL packet, this will make iterator recognize nameserver failure.

- Parameters:

request – request state (awaiting input)

src – [in] packet source address

packet – [in] input packet

- Returns:

any state

-

int kr_resolve_produce(struct kr_request *request, struct kr_transport **transport, knot_pkt_t *packet)¶

Produce either next additional query or finish.

If CONSUME is returned, then dst, type and packet will be filled with appropriate values, and the caller is responsible for sending them and receiving the answer. If it returns any other state, then the content of the variables is undefined.

- Parameters:

request – request state (in PRODUCE state)

dst – [out] possible address of the next nameserver

type – [out] possible used socket type (SOCK_STREAM, SOCK_DGRAM)

packet – [out] packet to be filled with additional query

- Returns:

any state

-

int kr_resolve_checkout(struct kr_request *request, const struct sockaddr *src, struct kr_transport *transport, knot_pkt_t *packet)¶

Finalises the outbound query packet with the knowledge of the IP addresses.

Note

The function must be called before actual sending of the request packet.

- Parameters:

request – request state (in PRODUCE state)

src – address from which the query is going to be sent

dst – address of the name server

type – used socket type (SOCK_STREAM, SOCK_DGRAM)

packet – [in,out] query packet to be finalised

- Returns:

kr_ok() or error code

-

int kr_resolve_finish(struct kr_request *request, int state)¶

Finish resolution and commit results if the state is DONE.

Note

The structures will be deinitialized, but the assigned memory pool is not going to be destroyed, as it’s owned by caller.

- Parameters:

request – request state

state – either DONE or FAIL state (to be assigned to request->state)

- Returns:

DONE

-

struct kr_rplan *kr_resolve_plan(struct kr_request *request)¶

Return resolution plan.

- Parameters:

request – request state

- Returns:

pointer to rplan

-

knot_mm_t *kr_resolve_pool(struct kr_request *request)¶

Return memory pool associated with request.

- Parameters:

request – request state

- Returns:

mempool

-

int kr_request_set_extended_error(struct kr_request *request, int info_code, const char *extra_text)¶

Set the extended DNS error for request.

The error is set only if it has a higher or the same priority as the one already assigned. The provided extra_text may be NULL, or a string that is allocated either statically, or on the request’s mempool. To clear any error, call it with KNOT_EDNS_EDE_NONE and NULL as extra_text.

To facilitate debugging, we include a unique base32 identifier at the start of the extra_text field for every call of this function. To generate such an identifier, you can use the command: $ base32 /dev/random | head -c 4

- Parameters:

request – request state

info_code – extended DNS error code

extra_text – optional string with additional information

- Returns:

info_code that is set after the call

-

static inline void kr_query_inform_timeout(struct kr_request *req, const struct kr_query *qry)¶

-

struct kr_context¶

- #include <resolve.h>

Name resolution context.

Resolution context provides basic services like cache, configuration and options.

Note

This structure is persistent between name resolutions and may be shared between threads.

Public Members

-

struct kr_qflags options¶

Default kr_request flags.

For startup defaults see init_resolver()

-

knot_rrset_t *downstream_opt_rr¶

Default EDNS towards both clients and upstream.

LATER: consider splitting the two, e.g. allow separately configured limits for UDP packet size (say, LAN is under control).

-

knot_rrset_t *upstream_opt_rr¶

-

struct kr_zonecut root_hints¶

-

unsigned cache_rtt_tout_retry_interval¶

-

module_array_t *modules¶

-

struct kr_cookie_ctx cookie_ctx¶

-

kr_cookie_lru_t *cache_cookie¶

-

int32_t tls_padding¶

See net.tls_padding in ../daemon/README.rst — -1 is “true” (default policy), 0 is “false” (no padding)

-

knot_mm_t *pool¶


-

struct kr_request_qsource_flags¶

Public Members

-

bool tcp¶

true if the request is not on UDP; only meaningful if (dst_addr).

-

bool tls¶

true if the request is encrypted; only meaningful if (dst_addr).

-

bool http¶

true if the request is on HTTP; only meaningful if (dst_addr).

-

bool xdp¶

true if the request is on AF_XDP; only meaningful if (dst_addr).


-

struct kr_extended_error¶

-

struct kr_request¶

- #include <resolve.h>

Name resolution request.

Keeps information about current query processing between calls to processing APIs, i.e. current resolved query, resolution plan, … Use this instead of the simple interface if you want to implement multiplexing or custom I/O.

Note

All data for this request must be allocated from the given pool.

Public Members

-

struct kr_context *ctx¶

-

knot_pkt_t *answer¶

-

const struct sockaddr *addr¶

Address that originated the request.

May be that of a client behind a proxy, if PROXYv2 is used. Otherwise, it will be the same as comm_addr. NULL for internal origin.

-

const struct sockaddr *comm_addr¶

Address that communicated the request.

This may be the address of a proxy. It is the same as addr if no proxy is used. NULL for internal origin.

-

const struct sockaddr *dst_addr¶

Address that accepted the request.

NULL for internal origin. Beware: in case of UDP on wildcard address it will be wildcard; closely related: issue #173.

-

const knot_pkt_t *packet¶

-

struct kr_request_qsource_flags flags¶

Request flags from the point of view of the original client.

This client may be behind a proxy.

-

struct kr_request_qsource_flags comm_flags¶

Request flags from the point of view of the client actually communicating with the resolver.

When the PROXYv2 protocol is used, this describes the request from the proxy. When there is no proxy, this will have exactly the same value as flags.

-

size_t size¶

query packet size

-

int32_t stream_id¶

HTTP/2 stream ID for DoH requests.

-

kr_http_header_array_t headers¶

HTTP/2 headers for DoH requests.

-

struct kr_request.[anonymous] qsource¶

-

unsigned rtt¶

Current upstream RTT.

-

const struct kr_transport *transport¶

Current upstream transport.

-

struct kr_request.[anonymous] upstream¶

Upstream information, valid only in consume() phase.

-

int state¶

-

ranked_rr_array_t answ_selected¶

-

ranked_rr_array_t auth_selected¶

-

ranked_rr_array_t add_selected¶

-

bool answ_validated¶

internal to validator; beware of caching, etc.

-

bool auth_validated¶

see answ_validated ^^ ; TODO

-

uint8_t rank¶

Overall rank for the request.

Values from kr_rank, currently just KR_RANK_SECURE and _INITIAL. Only read this in finish phase and after validator, please. Meaning of _SECURE: all RRs in answer+authority are _SECURE, including any negative results implied (NXDOMAIN, NODATA).

-

trace_log_f trace_log¶

Logging tracepoint.

-

trace_callback_f trace_finish¶

Request finish tracepoint.

-

int vars_ref¶

Reference to per-request variable table.

LUA_NOREF if not set.

-

knot_mm_t pool¶

-

unsigned int uid¶

for logging purposes only

-

addr_info_f is_tls_capable¶

-

addr_info_f is_tcp_connected¶

-

addr_info_f is_tcp_waiting¶

-

kr_sockaddr_array_t forwarding_targets¶

When forwarding, possible targets are put here.

-

struct kr_request.[anonymous] selection_context¶

-

unsigned int count_no_nsaddr¶

-

unsigned int count_fail_row¶

-

alloc_wire_f alloc_wire_cb¶

CB to allocate answer wire (can be NULL).

-

struct kr_extended_error extended_error¶

EDE info; don’t modify directly, use kr_request_set_extended_error()


Typedefs

Functions

-

void kr_qflags_set(struct kr_qflags *fl1, struct kr_qflags fl2)¶

Combine flags together.

This means set union for simple flags.

-

void kr_qflags_clear(struct kr_qflags *fl1, struct kr_qflags fl2)¶

Remove flags.

This means set-theoretic difference.
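
The union and difference semantics of the two functions above can be shown with a bitmask model in C. This is a sketch under assumptions: the library exposes the flags as bool members of struct kr_qflags, whereas the `QF_*` masks and the `qflags_set()` / `qflags_clear()` helpers here are hypothetical.

```c
#include <stdint.h>

/* Hypothetical bit positions standing in for a few query flags. */
#define QF_NO_MINIMIZE (UINT32_C(1) << 0)
#define QF_TCP         (UINT32_C(1) << 1)
#define QF_AWAIT_CUT   (UINT32_C(1) << 2)

/* Set union: every flag present in fl2 becomes set in *fl1. */
static void qflags_set(uint32_t *fl1, uint32_t fl2)
{
	*fl1 |= fl2;
}

/* Set difference: every flag present in fl2 is cleared in *fl1. */
static void qflags_clear(uint32_t *fl1, uint32_t fl2)
{
	*fl1 &= ~fl2;
}
```

Starting from `QF_TCP`, setting `QF_NO_MINIMIZE | QF_AWAIT_CUT` yields all three flags, and clearing `QF_TCP | QF_AWAIT_CUT` afterwards leaves only `QF_NO_MINIMIZE`.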

-

int kr_rplan_init(struct kr_rplan *rplan, struct kr_request *request, knot_mm_t *pool)¶

Initialize resolution plan (empty).

- Parameters:

rplan – plan instance

request – resolution request

pool – ephemeral memory pool for whole resolution

-

void kr_rplan_deinit(struct kr_rplan *rplan)¶

Deinitialize resolution plan, aborting any uncommitted transactions.

- Parameters:

rplan – plan instance

-

bool kr_rplan_empty(struct kr_rplan *rplan)¶

Return true if the resolution plan is empty (i.e. finished or initialized)

- Parameters:

rplan – plan instance

- Returns:

true or false

-

struct kr_query *kr_rplan_push_empty(struct kr_rplan *rplan, struct kr_query *parent)¶

Push empty query to the top of the resolution plan.

Note

This query serves as a cookie query only.

- Parameters:

rplan – plan instance

parent – query parent (or NULL)

- Returns:

query instance or NULL

-

struct kr_query *kr_rplan_push(struct kr_rplan *rplan, struct kr_query *parent, const knot_dname_t *name, uint16_t cls, uint16_t type)¶

Push a query to the top of the resolution plan.

Note

This means that this query takes precedence before all pending queries.

- Parameters:

rplan – plan instance

parent – query parent (or NULL)

name – resolved name

cls – resolved class

type – resolved type

- Returns:

query instance or NULL

-

int kr_rplan_pop(struct kr_rplan *rplan, struct kr_query *qry)¶

Pop existing query from the resolution plan.

Note

Popped queries are not discarded, but moved to the resolved list.

- Parameters:

rplan – plan instance

qry – resolved query

- Returns:

0 or an error
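
The push and pop semantics documented above (a pushed query takes precedence, a popped query moves to the resolved list) can be modelled with two small arrays. This is a simplified sketch, not the library's kr_rplan: `struct mini_plan`, `plan_push()` and `plan_pop()` are hypothetical, use fixed-size storage, and omit parents, flags and memory pools.

```c
#include <stddef.h>

#define PLAN_MAX 16

/* Hypothetical, fixed-size stand-in for a resolution plan. */
struct mini_query { const char *name; int type; };

struct mini_plan {
	struct mini_query pending[PLAN_MAX];   /* last element is solved next */
	size_t pending_len;
	struct mini_query resolved[PLAN_MAX];
	size_t resolved_len;
};

/* Push a query on top of the pending stack; it takes precedence over
 * everything already waiting. Returns NULL when the plan is full. */
static struct mini_query *plan_push(struct mini_plan *p, const char *name, int type)
{
	if (p->pending_len == PLAN_MAX)
		return NULL;
	struct mini_query *q = &p->pending[p->pending_len++];
	q->name = name;
	q->type = type;
	return q;
}

/* Pop a pending query: it is not discarded but moved to the resolved list.
 * Returns 0 on success, -1 if the query is not pending or there is no room.
 * Note: pointers to queries stored after the popped one become stale here,
 * because this sketch keeps the queries by value. */
static int plan_pop(struct mini_plan *p, const struct mini_query *q)
{
	if (p->resolved_len == PLAN_MAX)
		return -1;
	for (size_t i = 0; i < p->pending_len; ++i) {
		if (&p->pending[i] != q)
			continue;
		p->resolved[p->resolved_len++] = p->pending[i];
		/* Close the gap while preserving the order of the rest. */
		for (size_t j = i + 1; j < p->pending_len; ++j)
			p->pending[j - 1] = p->pending[j];
		p->pending_len--;
		return 0;
	}
	return -1;
}
```

Pushing two queries and popping the second leaves one pending and one resolved entry; popping the same pointer again fails because that query is no longer pending.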

-

bool kr_rplan_satisfies(struct kr_query *closure, const knot_dname_t *name, uint16_t cls, uint16_t type)¶

Return true if resolution chain satisfies given query.

-

struct kr_query *kr_rplan_last(struct kr_rplan *rplan)¶

Return last query (either currently being solved or last resolved).

This is necessary to retrieve the last query in case of resolution failures (e.g. time limit reached).

-

struct kr_query *kr_rplan_find_resolved(struct kr_rplan *rplan, struct kr_query *parent, const knot_dname_t *name, uint16_t cls, uint16_t type)¶

Check if a given query has already been resolved.

- Parameters:

rplan – plan instance

parent – query parent (or NULL)

name – resolved name

cls – resolved class

type – resolved type

- Returns:

query instance or NULL

-

struct kr_qflags¶

- #include <rplan.h>

Query flags.

Public Members

-

bool NO_MINIMIZE¶

Don’t minimize QNAME.

-

bool NO_IPV6¶

Disable IPv6.

-

bool NO_IPV4¶

Disable IPv4.

-

bool TCP¶

Use TCP (or TLS) for this query.

-

bool NO_ANSWER¶

Do not send any answer to the client.

Request state should be set to KR_STATE_FAIL when this flag is set.

-

bool RESOLVED¶

Query is resolved.

Note that kr_query gets RESOLVED before following a CNAME chain; see .CNAME.

-

bool AWAIT_IPV4¶

Query is waiting for A address.

-

bool AWAIT_IPV6¶

Query is waiting for AAAA address.

-

bool AWAIT_CUT¶

Query is waiting for zone cut lookup.

-

bool NO_EDNS¶

Don’t use EDNS.

-

bool CACHED¶

Query response is cached.

-

bool NO_CACHE¶

No cache for lookup; exception: finding NSs and subqueries.

-

bool EXPIRING¶

Query response is cached but expiring.

See is_expiring().

-

bool ALLOW_LOCAL¶

Allow queries to local or private address ranges.

-

bool DNSSEC_WANT¶

Want DNSSEC secured answer; exception: +cd, i.e. knot_wire_get_cd(request->qsource.packet->wire)

-

bool DNSSEC_BOGUS¶

Query response is DNSSEC bogus.

-

bool DNSSEC_INSECURE¶

Query response is DNSSEC insecure.

-

bool DNSSEC_CD¶

Instruction to set CD bit in request.

-

bool STUB¶

Stub resolution, accept received answer as solved.

-

bool ALWAYS_CUT¶

Always recover zone cut (even if cached).

-

bool DNSSEC_WEXPAND¶

Query response has wildcard expansion.

-

bool PERMISSIVE¶

Permissive resolver mode.

-

bool STRICT¶

Strict resolver mode.

-

bool BADCOOKIE_AGAIN¶

Query again because bad cookie returned.

-

bool CNAME¶

Query response contains CNAME in answer section.

-

bool REORDER_RR¶

Reorder cached RRs.

-

bool TRACE¶

Also log answers on debug level.

-

bool NO_0X20¶

Disable query case randomization.

-

bool DNSSEC_NODS¶

DS non-existence is proven.

-

bool DNSSEC_OPTOUT¶

Closest encloser proof has optout.

-

bool NONAUTH¶

Non-authoritative in-bailiwick records are enough.

TODO: utilize this also outside cache.

-

bool FORWARD¶

Forward all queries to upstream; validate answers.

-

bool DNS64_MARK¶

Internal mark for dns64 module.

-

bool CACHE_TRIED¶

Internal to cache module.

-

bool NO_NS_FOUND¶

No valid NS found during last PRODUCE stage.

-

bool PKT_IS_SANE¶

Set by iterator in consume phase to indicate whether some basic aspects of the packet are OK, e.g. QNAME.

-

bool DNS64_DISABLE¶

Don’t do any DNS64 stuff (meant for view:addr).


-

struct kr_query¶

- #include <rplan.h>

Single query representation.

Public Members

-

knot_dname_t *sname¶

The name to resolve - lower-cased, uncompressed.

-

uint16_t stype¶

-

uint16_t sclass¶

-

uint16_t id¶

-

uint16_t reorder¶

Seed to reorder (cached) RRs in answer or zero.

-

uint32_t secret¶

-

uint64_t creation_time_mono¶

-

uint64_t timestamp_mono¶

Time of query created or time of query to upstream resolver (milliseconds).

-

struct timeval timestamp¶

Real time for TTL+DNSSEC checks (.tv_sec only).

-

struct kr_zonecut zone_cut¶

-

struct kr_layer_pickle *deferred¶

-

int8_t cname_depth¶

Current xNAME depth, set by iterator.

0 = uninitialized, 1 = no CNAME, … See also KR_CNAME_CHAIN_LIMIT.

-

struct kr_query *cname_parent¶

Pointer to the query that originated this one because of following a CNAME (or NULL).

-

struct kr_request *request¶

Parent resolution request.

-

kr_stale_cb stale_cb¶

See the type.

-

struct kr_server_selection server_selection¶


-

struct kr_rplan¶

- #include <rplan.h>

Query resolution plan structure.

The structure most importantly holds the original query, answer and the list of pending queries required to resolve the original query. It also keeps a notion of current zone cut.

Public Members

-

kr_qarray_t pending¶

List of pending queries.

Beware: order is significant ATM, as the last is the next one to solve, and they may be inter-dependent.

-

kr_qarray_t resolved¶

List of resolved queries.

-

struct kr_request *request¶

Parent resolution request.

-

knot_mm_t *pool¶

Temporary memory pool.

-

uint32_t next_uid¶

Next value for kr_query::uid (incremental).


Cache¶

Defines

-

TTL_MAX_MAX¶

Functions

-

int cache_peek(kr_layer_t *ctx, knot_pkt_t *pkt)¶

-

int cache_stash(kr_layer_t *ctx, knot_pkt_t *pkt)¶

-

int kr_cache_open(struct kr_cache *cache, const struct kr_cdb_api *api, struct kr_cdb_opts *opts, knot_mm_t *mm)¶

Open/create cache with provided storage options.

- Parameters:

cache – cache structure to be initialized

api – storage engine API

opts – storage-specific options (may be NULL for default)

mm – memory context.

- Returns:

0 or an error code

-

void kr_cache_close(struct kr_cache *cache)¶

Close persistent cache.

Note

This doesn’t clear the data, just closes the connection to the database.

- Parameters:

cache – structure

-

int kr_cache_commit(struct kr_cache *cache)¶

Run after a row of operations to release transaction/lock if needed.

-

static inline bool kr_cache_is_open(struct kr_cache *cache)¶

Return true if cache is open and enabled.

-

static inline void kr_cache_make_checkpoint(struct kr_cache *cache)¶

(Re)set the time pair to the current values.

-

int kr_cache_insert_rr(struct kr_cache *cache, const knot_rrset_t *rr, const knot_rrset_t *rrsig, uint8_t rank, uint32_t timestamp, bool ins_nsec_p)¶

Insert RRSet into cache, replacing any existing data.

- Parameters:

cache – cache structure

rr – inserted RRSet

rrsig – RRSIG for inserted RRSet (optional)

rank – rank of the data

timestamp – current time (as-if; if the RR are older, their timestamp is appropriate)

ins_nsec_p – update NSEC* parameters if applicable

- Returns:

0 or an errcode

-

int kr_cache_clear(struct kr_cache *cache)¶

Clear all items from the cache.

- Parameters:

cache – cache structure

- Returns:

if nonzero is returned, there’s a big problem - you probably want to abort(), perhaps except for kr_error(EAGAIN) which probably indicates transient errors.

-

int kr_cache_peek_exact(struct kr_cache *cache, const knot_dname_t *name, uint16_t type, struct kr_cache_p *peek)¶

-

int32_t kr_cache_ttl(const struct kr_cache_p *peek, const struct kr_query *qry, const knot_dname_t *name, uint16_t type)¶

-

int kr_cache_materialize(knot_rdataset_t *dst, const struct kr_cache_p *ref, knot_mm_t *pool)¶

-

int kr_cache_remove(struct kr_cache *cache, const knot_dname_t *name, uint16_t type)¶

Remove an entry from cache.

Note

only “exact hits” are considered ATM, and some other information may be removed alongside.

- Parameters:

cache – cache structure

name – dname

type – rr type

- Returns:

number of deleted records, or negative error code

-

int kr_cache_match(struct kr_cache *cache, const knot_dname_t *name, bool exact_name, knot_db_val_t keyval[][2], int maxcount)¶

Get keys matching a dname lf prefix.

Note

the cache keys are matched by prefix, i.e. it very much depends on their structure; CACHE_KEY_DEF.

- Parameters:

cache – cache structure

name – dname

exact_name – whether to only consider exact name matches

keyval – matched key-value pairs

maxcount – limit on the number of returned key-value pairs

- Returns:

result count or an errcode

-

int kr_cache_remove_subtree(struct kr_cache *cache, const knot_dname_t *name, bool exact_name, int maxcount)¶

Remove a subtree in cache.

It’s like _match but removing them instead of returning.

- Returns:

number of deleted entries or an errcode

-

int kr_cache_closest_apex(struct kr_cache *cache, const knot_dname_t *name, bool is_DS, knot_dname_t **apex)¶

Find the closest cached zone apex for a name (in cache).

Note

timestamp is found by a syscall, and stale-serving is not considered

- Parameters:

is_DS – start searching one name higher

- Returns:

the number of labels to remove from the name, or negative error code

-

int kr_unpack_cache_key(knot_db_val_t key, knot_dname_t *buf, uint16_t *type)¶

Unpack dname and type from db key.

Note

only “exact hits” are considered ATM, moreover xNAME records are “hidden” as NS. (see comments in struct entry_h)

- Parameters:

key – db key representation

buf – output buffer of domain name in dname format

type – output for type

- Returns:

length of dname or an errcode

Variables

-

const char *kr_cache_emergency_file_to_remove¶

Path to cache file to remove on critical out-of-space error.

(do NOT modify it)

-

struct kr_cache¶

- #include <api.h>

Cache structure, keeps API, instance and metadata.

Public Members

-

kr_cdb_pt db¶

Storage instance.

-

const struct kr_cdb_api *api¶

Storage engine.

-

struct kr_cdb_stats stats¶

-

uint32_t ttl_min¶

-

uint32_t ttl_max¶

TTL limits; enforced primarily in iterator actually.

-

struct timeval checkpoint_walltime¶

Wall time on the last check-point.

-

uint64_t checkpoint_monotime¶

Monotonic milliseconds on the last check-point.

-

uv_timer_t *health_timer¶

Timer used for kr_cache_check_health()

-

struct kr_cache_p¶

Header internal for cache implementation(s).

Only LMDB works for now.

Defines

-

KR_CACHE_KEY_MAXLEN¶

LATER(optim.): this is overshot, but struct key usage should be cheap ATM.

-

KR_CACHE_RR_COUNT_SIZE¶

Size of the RR count field.

-

VERBOSE_MSG(qry, ...)¶

-

WITH_VERBOSE(qry)¶

-

cache_op(cache, op, ...)¶

Shorthand for operations on cache backend.

Typedefs

-

typedef uint32_t nsec_p_hash_t¶

Hash of NSEC3 parameters, used as a tag to separate different chains for same zone.

-

typedef knot_db_val_t entry_list_t[EL_LENGTH]¶

Decompressed entry_apex.

It’s an array of unparsed entry_h references. Note: arrays are passed “by reference” to functions (in C99).
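
The remark about arrays can be illustrated with a small stand-alone sketch. The names val_t, list_t and the LIST_* indices are hypothetical stand-ins for knot_db_val_t, entry_list_t and the EL_* enumerators; only the C language behavior is shown here, not the library code.

```c
#include <assert.h>
#include <stddef.h>

/* Hypothetical stand-in for knot_db_val_t: a (pointer, length) pair. */
typedef struct { void *data; size_t len; } val_t;

/* Hypothetical indices, analogous to enum EL. */
enum { LIST_NS, LIST_CNAME, LIST_DNAME, LIST_LENGTH };

/* Array typedef, analogous to entry_list_t. */
typedef val_t list_t[LIST_LENGTH];

/* The parameter decays to a pointer to the first element,
 * so the writes done here are visible to the caller. */
static void list_clear(list_t list)
{
	assert(list != NULL);
	for (int i = 0; i < LIST_LENGTH; ++i) {
		list[i].data = NULL;
		list[i].len = 0;
	}
}

/* Returns the number of slots that currently hold data. */
static int list_count_filled(const list_t list)
{
	assert(list != NULL);
	int n = 0;
	for (int i = 0; i < LIST_LENGTH; ++i)
		n += (list[i].data != NULL);
	return n;
}
```

Because the parameter is really a pointer, sizeof(list) inside the callee yields the pointer size, so the element count has to come from a constant such as LIST_LENGTH.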

Enums

-

enum EL¶

Indices for decompressed entry_list_t.

Values:

-

enumerator EL_NS¶

-

enumerator EL_CNAME¶

-

enumerator EL_DNAME¶

-

enumerator EL_LENGTH¶

-

enum [anonymous]¶

Values:

-

enumerator AR_ANSWER¶

Positive answer record.

It might be wildcard-expanded.

-

enumerator AR_SOA¶

SOA record.

-

enumerator AR_NSEC¶

NSEC* covering or matching the SNAME (next closer name in NSEC3 case).

-

enumerator AR_WILD¶

NSEC* covering or matching the source of synthesis.

-

enumerator AR_CPE¶

NSEC3 matching the closest provable encloser.

Functions

-

struct entry_h *entry_h_consistent_E(knot_db_val_t data, uint16_t type)¶

Check basic consistency of entry_h for ‘E’ entries, not looking into ->data.

(for is_packet the length of data is checked)

-

struct entry_apex *entry_apex_consistent(knot_db_val_t val)¶

-

static inline struct entry_h *entry_h_consistent_NSEC(knot_db_val_t data)¶

Consistency check, ATM common for NSEC and NSEC3.

-

static inline int nsec_p_rdlen(const uint8_t *rdata)¶

-

static inline nsec_p_hash_t nsec_p_mkHash(const uint8_t *nsec_p)¶

-

knot_db_val_t key_exact_type_maypkt(struct key *k, uint16_t type)¶

Finish constructing string key for exact search.

It’s assumed that kr_dname_lf(k->buf, owner, *) had been run.

-

static inline knot_db_val_t key_exact_type(struct key *k, uint16_t type)¶

Like key_exact_type_maypkt but with extra checks if used for RRs only.

-

int entry_h_seek(knot_db_val_t *val, uint16_t type)¶

There may be multiple entries within, so rewind val to the one we want.

ATM there are multiple types only for the NS ktype - it also accommodates xNAMEs.

Note

val->len represents the bound of the whole list, not of a single entry.

Note

in case of ENOENT, val is still rewound to the beginning of the next entry.

- Returns:

error code TODO: maybe get rid of this API?

-

int entry_h_splice(knot_db_val_t *val_new_entry, uint8_t rank, const knot_db_val_t key, const uint16_t ktype, const uint16_t type, const knot_dname_t *owner, const struct kr_query *qry, struct kr_cache *cache, uint32_t timestamp)¶

Prepare space to insert an entry.

Some checks are performed (rank, TTL), the current entry in cache is copied with a hole ready for the new entry (old one of the same type is cut out).

- Parameters:

val_new_entry – The only changing parameter; ->len is read, ->data written.

- Returns:

error code

-

int entry_list_parse(const knot_db_val_t val, entry_list_t list)¶

Parse an entry_apex into individual items.

- Returns:

error code.

-

static inline size_t to_even(size_t n)¶

-

static inline int entry_list_serial_size(const entry_list_t list)¶

-

void entry_list_memcpy(struct entry_apex *ea, entry_list_t list)¶

Fill contents of an entry_apex.

Note

NULL pointers are overwritten - caller may like to fill the space later.

-

void stash_pkt(const knot_pkt_t *pkt, const struct kr_query *qry, const struct kr_request *req, bool needs_pkt)¶

Stash the packet into cache (if suitable, etc.)

- Parameters:

needs_pkt – we need the packet due to not stashing some RRs; see stash_rrset() for details.

It assumes check_dname_for_lf().

-

int answer_from_pkt(kr_layer_t *ctx, knot_pkt_t *pkt, uint16_t type, const struct entry_h *eh, const void *eh_bound, uint32_t new_ttl)¶

Try answering from packet cache, given an entry_h.

This assumes the TTL is OK and entry_h_consistent, but it may still return error. On success it handles all the rest, incl. qry->flags.

-

static inline bool is_expiring(uint32_t orig_ttl, uint32_t new_ttl)¶

Record is expiring if it has less than 1% TTL (or less than 5s)
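
A literal reading of this rule can be sketched as below. The name is_expiring_sketch is hypothetical, and the library's actual arithmetic may combine the two thresholds differently; the sketch only shows the rule as the sentence states it.

```c
#include <assert.h>
#include <stdbool.h>
#include <stdint.h>

/* Literal reading of the rule: a record is "expiring" when less than 1 %
 * of its original TTL remains, or when fewer than 5 seconds remain. */
static bool is_expiring_sketch(uint32_t orig_ttl, uint32_t new_ttl)
{
	/* Assumption of this sketch: the remaining TTL never exceeds the original. */
	assert(new_ttl <= orig_ttl);
	/* 64-bit arithmetic avoids overflow in the multiplication. */
	uint64_t remaining = new_ttl;
	return remaining * 100 < orig_ttl || remaining < 5;
}
```

With an original TTL of 3600 s, for example, 35 s remaining counts as expiring under this reading while 36 s does not.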

-

int32_t get_new_ttl(const struct entry_h *entry, const struct kr_query *qry, const knot_dname_t *owner, uint16_t type, uint32_t now)¶

Returns a signed result so you can inspect how stale the RR is.

Note

NSEC* uses zone name ATM; for NSEC3 the owner may not even be knowable.

- Parameters:

owner – name for stale-serving decisions. You may pass NULL to disable stale.

type – for stale-serving.
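
The signed return value can be pictured with the following sketch. It assumes the remaining TTL is simply the stored TTL minus the entry's age, and it ignores the stale-serving decisions entirely. struct entry_times and remaining_ttl are hypothetical names; the two fields mirror time and ttl of struct entry_h.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Hypothetical subset of struct entry_h: only the two time fields. */
struct entry_times {
	uint32_t time; /* time of inception (seconds) */
	uint32_t ttl;  /* TTL at the inception moment (seconds) */
};

/* Remaining TTL at `now`; a negative result is the staleness in seconds. */
static int32_t remaining_ttl(const struct entry_times *e, uint32_t now)
{
	assert(e != NULL);
	/* Entries stamped slightly in the future are treated as having age 0. */
	int64_t age = (int64_t)now - (int64_t)e->time;
	if (age < 0)
		age = 0;
	int64_t left = (int64_t)e->ttl - age;
	/* Clamp so that the value fits the signed 32-bit return type. */
	if (left < INT32_MIN)
		left = INT32_MIN;
	if (left > INT32_MAX)
		left = INT32_MAX;
	return (int32_t)left;
}
```

A caller can then tell a fresh record (result >= 0) from a stale one and see how many seconds past expiry it is.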

-

static inline int rdataset_dematerialize_size(const knot_rdataset_t *rds)¶

Compute size of serialized rdataset.

NULL is accepted as empty set.

-

static inline int rdataset_dematerialized_size(const uint8_t *data, uint16_t *rdataset_count)¶

Analyze the length of a dematerialized rdataset.

Note that in the data it’s KR_CACHE_RR_COUNT_SIZE and then this returned size.

-

void rdataset_dematerialize(const knot_rdataset_t *rds, uint8_t *restrict data)¶

Serialize an rdataset.

It may be NULL as short-hand for empty.

-

int entry2answer(struct answer *ans, int id, const struct entry_h *eh, const uint8_t *eh_bound, const knot_dname_t *owner, uint16_t type, uint32_t new_ttl)¶

Materialize RRset + RRSIGs into ans->rrsets[id].

LATER(optim.): it’s slightly wasteful that we allocate knot_rrset_t for the packet

- Returns:

error code. They are all bad conditions and “guarded” by kresd’s assertions.

-

int pkt_renew(knot_pkt_t *pkt, const knot_dname_t *name, uint16_t type)¶

Prepare answer packet to be filled by RRs (without RR data in wire).

-

int pkt_append(knot_pkt_t *pkt, const struct answer_rrset *rrset, uint8_t rank)¶

Append RRset + its RRSIGs into the current section (shallow copy), with given rank.

Note

it works with empty set as well (skipped)

Note

pkt->wire is not updated in any way

Note

KNOT_CLASS_IN is assumed

Note

Whole RRsets are put into the pseudo-packet; normal parsed packets would only contain single-RR sets.

-

knot_db_val_t key_NSEC1(struct key *k, const knot_dname_t *name, bool add_wildcard)¶

Construct a string key for NSEC (1) predecessor-search.

Note

k->zlf_len is assumed to have been correctly set

- Parameters:

add_wildcard – Act as if the name was extended by “*.”

-

int nsec1_encloser(struct key *k, struct answer *ans, const int sname_labels, int *clencl_labels, knot_db_val_t *cover_low_kwz, knot_db_val_t *cover_hi_kwz, const struct kr_query *qry, struct kr_cache *cache)¶

Closest encloser check for NSEC (1).

To understand the interface, see the call point.

- Parameters:

k – space to store key + input: zname and zlf_len

- Returns:

0: success; >0: try other (NSEC3); <0: exit cache immediately.

-

int nsec1_src_synth(struct key *k, struct answer *ans, const knot_dname_t *clencl_name, knot_db_val_t cover_low_kwz, knot_db_val_t cover_hi_kwz, const struct kr_query *qry, struct kr_cache *cache)¶

Source of synthesis (SS) check for NSEC (1).

To understand the interface, see the call point.

- Returns:

0: continue; <0: exit cache immediately; AR_SOA: skip to adding SOA (SS was covered or matched for NODATA).

-

knot_db_val_t key_NSEC3(struct key *k, const knot_dname_t *nsec3_name, const nsec_p_hash_t nsec_p_hash)¶

Construct a string key for NSEC3 predecessor-search, from an NSEC3 name.

Note

k->zlf_len is assumed to have been correctly set

-

int nsec3_encloser(struct key *k, struct answer *ans, const int sname_labels, int *clencl_labels, const struct kr_query *qry, struct kr_cache *cache)¶

TODO.

See nsec1_encloser(…)

-

int nsec3_src_synth(struct key *k, struct answer *ans, const knot_dname_t *clencl_name, const struct kr_query *qry, struct kr_cache *cache)¶

TODO.

See nsec1_src_synth(…)

-

static inline uint16_t get_uint16(const void *address)¶

-

static inline uint8_t *knot_db_val_bound(knot_db_val_t val)¶

Useful pattern, especially as void-pointer arithmetic isn’t standard-compliant.
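
The pattern can be sketched as follows, assuming the value type is a plain (pointer, length) pair. val_t and val_bound are hypothetical stand-ins for knot_db_val_t and knot_db_val_bound.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Hypothetical stand-in for knot_db_val_t. */
typedef struct { void *data; size_t len; } val_t;

/* One-past-the-end pointer of the value. The cast to uint8_t * is needed
 * because arithmetic on void * is a compiler extension, not standard C. */
static uint8_t *val_bound(val_t val)
{
	assert(val.data != NULL);
	return (uint8_t *)val.data + val.len;
}
```

Such a bound is handy for checking that a parsed header or entry does not run past the end of the stored value.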

Variables

-

static const int NSEC_P_MAXLEN = sizeof(uint32_t) + 5 + 255¶

-

static const int NSEC3_HASH_LEN = 20¶

Hash is always SHA1; I see no plans to standardize anything else.

-

static const int NSEC3_HASH_TXT_LEN = 32¶
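
The two constants are consistent with NSEC3 hashes being written in unpadded base32 in owner names (RFC 5155): 20 bytes are 160 bits, and at 5 bits per character that gives 32 characters. The helper below is a hypothetical illustration of that arithmetic, not a library function.

```c
#include <assert.h>
#include <stddef.h>

/* Number of base32 characters needed for `bytes` octets, without padding:
 * each character carries 5 bits, so the bit count is divided by 5, rounding up. */
static size_t base32_len(size_t bytes)
{
	assert(bytes <= ((size_t)-1 - 4) / 8); /* guard against overflow */
	return (bytes * 8 + 4) / 5;
}
```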

-

struct entry_h¶

Public Members

-

uint32_t time¶

The time of inception.

-

uint32_t ttl¶

TTL at inception moment.

Assuming it fits into int32_t ATM.

-

uint8_t rank¶

See enum kr_rank.

-

bool is_packet¶

Negative-answer packet for insecure/bogus name.

-

bool has_optout¶

Only for packets; persisted DNSSEC_OPTOUT.

-

uint8_t _pad¶

We need even alignment for data now.

-

uint8_t data[]¶

-

struct nsec_p¶

- #include <impl.h>

NSEC* parameters for the chain.

Public Members

-

const uint8_t *raw¶

Pointer to raw NSEC3 parameters; NULL for NSEC.

-

nsec_p_hash_t hash¶

Hash of raw, used for cache keys.

-

dnssec_nsec3_params_t libknot¶

Format for libknot; owns malloced memory!

-

struct key¶

Public Members

-

const knot_dname_t *zname¶

current zone name (points within qry->sname)

-

uint8_t zlf_len¶

length of current zone’s lookup format

-

uint16_t type¶

Corresponding key type; e.g. NS for CNAME.

Note: NSEC type is ambiguous (exact and range key).

-

uint8_t buf[KR_CACHE_KEY_MAXLEN]¶

The key data start at buf+1, and buf[0] contains some length.

For details see key_exact* and key_NSEC* functions.

-

struct entry_apex¶

- #include <impl.h>

Header of ‘E’ entry with ktype == NS.

Inside is private to ./entry_list.c

We store xNAME at NS type to lower the number of searches in closest_NS(). CNAME is only considered for equal name, of course. We also store NSEC* parameters at NS type.

Public Members

-

bool has_ns¶

-

bool has_cname¶

-

bool has_dname¶

-

uint8_t pad_¶

1 byte + 2 bytes + x bytes would be weird; let’s do 2+2+x.

-

int8_t nsecs[ENTRY_APEX_NSECS_CNT]¶

We have two slots for NSEC* parameters.

This array describes how they’re filled; values: 0: none, 1: NSEC, 3: NSEC3.

Two slots are a compromise to smoothly handle normal rollovers (either changing NSEC3 parameters or between NSEC and NSEC3).

-

uint8_t data[]¶

-

struct answer¶

- #include <impl.h>

Partially constructed answer when gathering RRsets from cache.

Public Members

-

int rcode¶

PKT_NODATA, etc.

-

knot_mm_t *mm¶

Allocator for rrsets.

-

struct answer.answer_rrset rrsets[1 + 1 + 3]¶

see AR_ANSWER and friends; only required records are filled

-

struct answer_rrset¶

Nameservers¶

Provides server selection API (see kr_server_selection) and functions common to both implementations.

Enums

-

enum kr_selection_error¶

These errors are to be reported as feedback to server selection.

See kr_server_selection::error for more details.

Values:

-

enumerator KR_SELECTION_OK¶

-

enumerator KR_SELECTION_QUERY_TIMEOUT¶

-

enumerator KR_SELECTION_TLS_HANDSHAKE_FAILED¶

-

enumerator KR_SELECTION_TCP_CONNECT_FAILED¶

-

enumerator KR_SELECTION_TCP_CONNECT_TIMEOUT¶

-

enumerator KR_SELECTION_REFUSED¶

-

enumerator KR_SELECTION_SERVFAIL¶

-

enumerator KR_SELECTION_FORMERR¶

inside an answer without an OPT record

-

enumerator KR_SELECTION_FORMERR_EDNS¶

with an OPT record

-

enumerator KR_SELECTION_NOTIMPL¶

-

enumerator KR_SELECTION_OTHER_RCODE¶

-

enumerator KR_SELECTION_MALFORMED¶

-

enumerator KR_SELECTION_MISMATCHED¶

Name or type mismatch.

-

enumerator KR_SELECTION_TRUNCATED¶

-

enumerator KR_SELECTION_DNSSEC_ERROR¶

-

enumerator KR_SELECTION_LAME_DELEGATION¶

-

enumerator KR_SELECTION_BAD_CNAME¶

Too long chain, or a cycle.

-

enumerator KR_SELECTION_NUMBER_OF_ERRORS¶

Leave this last, as it is used as array size.

-

enum kr_transport_protocol¶

Values:

-

enumerator KR_TRANSPORT_RESOLVE_A¶

Selected name with no IPv4 address, it has to be resolved first.

-

enumerator KR_TRANSPORT_RESOLVE_AAAA¶

Selected name with no IPv6 address, it has to be resolved first.

-

enumerator KR_TRANSPORT_UDP¶

-

enumerator KR_TRANSPORT_TCP¶

-

enumerator KR_TRANSPORT_TLS¶

Functions

-

void kr_server_selection_init(struct kr_query *qry)¶

Initialize the server selection API for qry.

The implementation is to be chosen based on qry->flags.

-

int kr_forward_add_target(struct kr_request *req, const struct sockaddr *sock)¶

Add forwarding target to request.

This is exposed to Lua in order to add forwarding targets to request. These are then shared by all the queries in said request.

-

struct kr_transport *select_transport(const struct choice choices[], int choices_len, const struct to_resolve unresolved[], int unresolved_len, int timeouts, struct knot_mm *mempool, bool tcp, size_t *choice_index)¶

Based on passed choices, choose the next transport.

Common function to both implementations (iteration and forwarding). The *_choose_transport functions from selection_*.h preprocess the input for this one.

- Parameters:

choices – Options to choose from, see struct above

unresolved – Array of names that can be resolved (i.e. no A/AAAA record)

timeouts – Number of timeouts that occurred in this query (used for exponential backoff)

mempool – Memory context of current request

tcp – Force TCP as transport protocol

choice_index – [out] Optionally index of the chosen transport in the choices array.

- Returns:

Chosen transport (on mempool) or NULL when no choice is viable

-

void update_rtt(struct kr_query *qry, struct address_state *addr_state, const struct kr_transport *transport, unsigned rtt)¶

Common part of RTT feedback mechanism.

Notes RTT to global cache.

-

void error(struct kr_query *qry, struct address_state *addr_state, const struct kr_transport *transport, enum kr_selection_error sel_error)¶

Common part of error feedback mechanism.

-

struct rtt_state get_rtt_state(const uint8_t *ip, size_t len, struct kr_cache *cache)¶

Get RTT state from cache.

Returns default_rtt_state on unknown addresses.

Note that this opens a cache transaction which is usually closed by calling put_rtt_state, i.e. the caller is responsible for closing it (e.g. by calling kr_cache_commit).

-

void bytes_to_ip(uint8_t *bytes, size_t len, uint16_t port, union kr_sockaddr *dst)¶

-

uint8_t *ip_to_bytes(const union kr_sockaddr *src, size_t len)¶

-

void update_address_state(struct address_state *state, union kr_sockaddr *address, size_t address_len, struct kr_query *qry)¶

-

bool no6_is_bad(void)¶

-

struct kr_transport¶

- #include <selection.h>

Output of the selection algorithm.

Public Members

-

knot_dname_t *ns_name¶

Set to “.” for forwarding targets.

-

union kr_sockaddr address¶

-

size_t address_len¶

-

enum kr_transport_protocol protocol¶

-

unsigned timeout¶

Timeout in ms to be set for UDP transmission.

-

bool timeout_capped¶

Timeout was capped to a maximum value based on the other candidates when choosing this transport.

The timeout therefore can be much lower than what we expect it to be. We basically probe the server for a sudden network change but we expect it to timeout in most cases. We have to keep this in mind when noting the timeout in cache.

-

bool deduplicated¶

True iff transport was set in worker.c:subreq_finalize, that means it may be different from the one originally chosen.

-

struct local_state¶

Public Members

-

int timeouts¶

Number of timeouts that occurred resolving this query.

-

bool truncated¶

Query was truncated, switch to TCP.

-

bool force_resolve¶

Force resolution of a new NS name (if possible). Done by selection.c:error in some cases.

-

bool force_udp¶

Used to work around auths with broken TCP.

-

void *private¶

Inner state of the implementation.

-

struct kr_server_selection¶

- #include <selection.h>

Specifies an API for selecting transports and giving feedback on the choices.

The function pointers are to be used throughout the resolver when some information about the transport is obtained, e.g. RTT in worker.c or RCODE in iterate.c, …

Public Members

-

bool initialized¶

-

void (*choose_transport)(struct kr_query *qry, struct kr_transport **transport)¶

Puts a pointer to the next transport of qry to transport.

Allocates new kr_transport in request’s mempool, chooses transport to be used for this query. Selection may fail, so transport can be set to NULL.

- Param transport:

to be filled with pointer to the chosen transport or NULL on failure

-

void (*update_rtt)(struct kr_query *qry, const struct kr_transport *transport, unsigned rtt)¶

Report back the RTT of network operation for transport in ms.

-

void (*error)(struct kr_query *qry, const struct kr_transport *transport, enum kr_selection_error error)¶

Report back error encountered with the chosen transport.

See enum kr_selection_error.

-

struct local_state *local_state¶

-

struct rtt_state¶

- #include <selection.h>

To be held per IP address in the global LMDB cache.

Public Members

-

int32_t srtt¶

Smoothed RTT, i.e. an estimate of round-trip time.

-

int32_t variance¶

An estimate of RTT’s standard deviation (not variance).

-

int32_t consecutive_timeouts¶

Note: some TCP and TLS failures are also considered as timeouts.

-

uint64_t dead_since¶

Timestamp of pronouncing this IP bad based on KR_NS_TIMEOUT_ROW_DEAD.
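
How a smoothed RTT and its deviation estimate can be maintained is sketched below in the style of RFC 6298. The weights (1/8 and 1/4), the use of a negative srtt as a "no measurement yet" marker and the names rtt_sketch and rtt_update are assumptions of this sketch; the resolver's own update rule may use different constants.

```c
#include <assert.h>
#include <stdint.h>
#include <stdlib.h>

/* Hypothetical subset of struct rtt_state. */
struct rtt_sketch {
	int32_t srtt;     /* smoothed RTT in ms */
	int32_t variance; /* estimate of the RTT deviation in ms */
};

/* One update step in the style of RFC 6298 (alpha = 1/8, beta = 1/4).
 * A negative srtt marks "no measurement yet" in this sketch. */
static struct rtt_sketch rtt_update(struct rtt_sketch old, int32_t rtt_ms)
{
	assert(rtt_ms >= 0);
	struct rtt_sketch ret;
	if (old.srtt < 0) {
		/* First sample: take it as the estimate, half of it as deviation. */
		ret.srtt = rtt_ms;
		ret.variance = rtt_ms / 2;
		return ret;
	}
	/* The deviation is updated from the old srtt, before srtt itself moves. */
	ret.variance = (3 * old.variance + abs(old.srtt - rtt_ms)) / 4;
	ret.srtt = (7 * old.srtt + rtt_ms) / 8;
	return ret;
}
```

A single slow answer therefore moves the estimate only slightly, while a persistent change in latency is picked up after a few samples.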

-

struct address_state¶

- #include <selection.h>

To be held per IP address and locally “inside” query.

-

struct choice¶

- #include <selection.h>

Array of these is one of the inputs for the actual selection algorithm (select_transport).

Public Members

-

union kr_sockaddr address¶

-

size_t address_len¶

-

struct address_state *address_state¶

-

uint16_t port¶

used to overwrite the port number; if zero, select_transport determines it.

-

struct to_resolve¶

- #include <selection.h>

Array of these is a description of names to be resolved (i.e. name without some address).

Public Members

-

knot_dname_t *name¶

-

enum kr_transport_protocol type¶

Either KR_TRANSPORT_RESOLVE_A or KR_TRANSPORT_RESOLVE_AAAA is valid here.

Functions

-

int kr_zonecut_init(struct kr_zonecut *cut, const knot_dname_t *name, knot_mm_t *pool)¶

Populate root zone cut with SBELT.

- Parameters:

cut – zone cut

name –

pool –

- Returns:

0 or error code

-

void kr_zonecut_deinit(struct kr_zonecut *cut)¶

Clear the structure and free the address set.

- Parameters:

cut – zone cut

-

void kr_zonecut_move(struct kr_zonecut *to, const struct kr_zonecut *from)¶

Move a zonecut, transferring ownership of any pointed-to memory.

- Parameters:

to – the target - it gets deinit-ed

from – the source - not modified, but shouldn’t be used afterward
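
The ownership transfer can be pictured with a miniature structure that owns a single heap-allocated name. struct cut_sketch and the cut_* helpers are hypothetical and much simpler than struct kr_zonecut; the sketch only shows why the source must not be used after a move.

```c
#include <assert.h>
#include <stdlib.h>
#include <string.h>

/* Hypothetical miniature of a zone cut: it owns one heap-allocated name. */
struct cut_sketch {
	char *name;
};

/* Replace the owned name with a copy of `name`; returns 0, or -1 on ENOMEM. */
static int cut_set(struct cut_sketch *cut, const char *name)
{
	assert(cut != NULL && name != NULL);
	size_t size = strlen(name) + 1;
	char *copy = malloc(size);
	if (copy == NULL)
		return -1;
	memcpy(copy, name, size);
	free(cut->name);
	cut->name = copy;
	return 0;
}

/* Free everything the structure owns. */
static void cut_deinit(struct cut_sketch *cut)
{
	assert(cut != NULL);
	free(cut->name);
	cut->name = NULL;
}

/* Move: the target is deinit-ed first, then takes over the source's
 * pointers. The source is left untouched but must not be used or
 * deinit-ed afterwards, otherwise the memory would be freed twice. */
static void cut_move(struct cut_sketch *to, const struct cut_sketch *from)
{
	assert(to != NULL && from != NULL && to != from);
	cut_deinit(to);
	*to = *from;
}
```

After cut_move(&a, &b) only a may be deinit-ed; b still holds the same pointer value but no longer owns it.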

-

void kr_zonecut_set(struct kr_zonecut *cut, const knot_dname_t *name)¶

Reset zone cut to given name and clear address list.

Note

This clears the address list even if the name doesn’t change. TA and DNSKEY don’t change.

- Parameters:

cut – zone cut to be set

name – new zone cut name

-

int kr_zonecut_copy(struct kr_zonecut *dst, const struct kr_zonecut *src)¶

Copy zone cut, including all data.

Does not copy keys and trust anchor.

Note

addresses for names in src get replaced and others are left as they were.

- Parameters:

dst – destination zone cut

src – source zone cut

- Returns:

0 or an error code; If it fails with kr_error(ENOMEM), it may be in a half-filled state, but it’s safe to deinit…

-

int kr_zonecut_copy_trust(struct kr_zonecut *dst, const struct kr_zonecut *src)¶

Copy zone trust anchor and keys.

- Parameters:

dst – destination zone cut

src – source zone cut

- Returns:

0 or an error code

-

int kr_zonecut_add(struct kr_zonecut *cut, const knot_dname_t *ns, const void *data, int len)¶

Add address record to the zone cut.

The record will be merged with existing data, it may be either A/AAAA type.

- Parameters:

cut – zone cut to be populated

ns – nameserver name

data – typically knot_rdata_t::data

len – typically knot_rdata_t::len

- Returns:

0 or error code

-

int kr_zonecut_del(struct kr_zonecut *cut, const knot_dname_t *ns, const void *data, int len)¶

Delete nameserver/address pair from the zone cut.

- Parameters:

cut –

ns – name server name

data – typically knot_rdata_t::data

len – typically knot_rdata_t::len

- Returns:

0 or error code

-

int kr_zonecut_del_all(struct kr_zonecut *cut, const knot_dname_t *ns)¶

Delete all addresses associated with the given name.

- Parameters:

cut –

ns – name server name

- Returns:

0 or error code

-

pack_t *kr_zonecut_find(struct kr_zonecut *cut, const knot_dname_t *ns)¶

Find nameserver address list in the zone cut.

Note

This can be used for membership test, a non-null pack is returned if the nameserver name exists.

- Parameters:

cut –

ns – name server name

- Returns:

pack of addresses or NULL

-

int kr_zonecut_set_sbelt(struct kr_context *ctx, struct kr_zonecut *cut)¶

Populate zone cut with a root zone using SBELT (RFC 1034).

- Parameters:

ctx – resolution context (to fetch root hints)

cut – zone cut to be populated

- Returns:

0 or error code

-

int kr_zonecut_find_cached(struct kr_context *ctx, struct kr_zonecut *cut, const knot_dname_t *name, const struct kr_query *qry, bool *restrict secured)¶

Populate zone cut address set from cache.

The size is limited to avoid possibility of doing too much CPU work.

- Parameters:

ctx – resolution context (to fetch data from LRU caches)

cut – zone cut to be populated

name – QNAME to start finding zone cut for

qry – query for timestamp and stale-serving decisions

secured – set to true if you want a secured zone cut; will return false if it is provably insecure

- Returns:

0 or error code (ENOENT if it doesn’t find anything)

-

bool kr_zonecut_is_empty(struct kr_zonecut *cut)¶

Check if any address is present in the zone cut.

- Parameters:

cut – zone cut to check

- Returns:

true/false

-

struct kr_zonecut¶

- #include <zonecut.h>

Current zone cut representation.

Modules¶

Module API definition and functions for (un)loading modules.

Defines

-

KR_MODULE_EXPORT(module)¶

Export module API version (place this at the end of your module).

- Parameters:

module – module name (e.g. policy)

-

KR_MODULE_API¶

Functions

-

int kr_module_load(struct kr_module *module, const char *name, const char *path)¶

Load a C module instance into memory.

And call its init().

- Parameters:

module – module structure. Will be overwritten except for ->data on success.

name – module name

path – module search path

- Returns:

0 or an error

-

void kr_module_unload(struct kr_module *module)¶

Unload module instance.

Note

currently used even for lua modules

- Parameters:

module – module structure

-

kr_module_init_cb kr_module_get_embedded(const char *name)¶

Get embedded module’s init function by name (or NULL).

-

struct kr_module¶

- #include <module.h>

Module representation.

The five symbols (init, …) may be defined by the module as name_init(), etc; all are optional and missing symbols are represented as NULLs.

Public Members

-

char *name¶

-

int (*init)(struct kr_module *self)¶

Constructor.

Called after loading the module.

- Return:

error code. Lua modules: not populated, called via lua directly.

-

int (*deinit)(struct kr_module *self)¶

Destructor.

Called before unloading the module.

- Return:

error code.

-

int (*config)(struct kr_module *self, const char *input)¶

Configure with encoded JSON (NULL if missing).

- Return:

error code. Lua modules: not used and not useful from C. When called from lua, input is JSON, like for kr_prop_cb.

-

const kr_layer_api_t *layer¶

Packet processing API specs.

May be NULL. See docs on that type. Owned by the module code.

-

const struct kr_prop *props¶

List of properties.

May be NULL. Terminated by { NULL, NULL, NULL }. Lua modules: not used and not useful.

-

void *lib¶

dlopen() handle; RTLD_DEFAULT for embedded modules; NULL for lua modules.

-

void *data¶

Custom data context.

-

struct kr_prop¶

- #include <module.h>

Module property (named callable).
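
The props member of struct kr_module is described above as a list terminated by { NULL, NULL, NULL }. A sentinel-terminated table of that kind could be declared and searched as sketched below. struct prop_sketch, its field names and the callback type are hypothetical; the real struct kr_prop and its callback signature may differ.

```c
#include <assert.h>
#include <stddef.h>
#include <string.h>

/* Hypothetical stand-in for a property entry: callback, name, description. */
typedef const char *(*prop_cb)(const char *input);
struct prop_sketch {
	prop_cb cb;
	const char *name;
	const char *info;
};

/* Example callable; the input is ignored in this sketch. */
static const char *prop_hello(const char *input)
{
	(void)input;
	return "hello";
}

/* Property table; the all-NULL entry terminates it. */
static const struct prop_sketch props[] = {
	{ prop_hello, "hello", "Return a greeting." },
	{ NULL, NULL, NULL }
};

/* Linear search that stops at the terminator. */
static const struct prop_sketch *prop_find(const struct prop_sketch *list,
                                           const char *name)
{
	assert(name != NULL);
	if (list == NULL) /* the list itself may be NULL */
		return NULL;
	for (; list->name != NULL; ++list) {
		if (strcmp(list->name, name) == 0)
			return list;
	}
	return NULL;
}
```

The terminator lets callers walk the table without a separate element count.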

Typedefs

-

typedef struct kr_layer_api kr_layer_api_t¶

Enums

-

enum kr_layer_state¶

Layer processing states.

Only one value at a time (but see TODO).

Each state represents the state machine transition, and determines readiness for the next action. See struct kr_layer_api for the actions.

TODO: the cookie module sometimes sets (_FAIL | _DONE) on purpose (!)

Values:

-

enumerator KR_STATE_CONSUME¶

Consume data.

-

enumerator KR_STATE_PRODUCE¶

Produce data.

-

enumerator KR_STATE_DONE¶

Finished successfully or a special case: in CONSUME phase this can be used (by iterator) to do a transition to PRODUCE phase again, in which case the packet wasn’t accepted for some reason.

-

enumerator KR_STATE_FAIL¶

Error.

-

enumerator KR_STATE_YIELD¶

Paused, waiting for a sub-query.

Functions

-

static inline bool kr_state_consistent(enum kr_layer_state s)¶

Check that a kr_layer_state makes sense.

We’re not very strict ATM.
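
Since the state is tested with expressions such as (state & KR_STATE_FAIL), the states behave like bit flags. The sketch below assumes one bit per state; the STATE_* names mirror KR_STATE_*, but the numeric values and both helper functions are assumptions made for illustration.

```c
#include <assert.h>
#include <stdbool.h>

/* Assumed values: one bit per state, so that tests such as
 * (state & STATE_FAIL) work. */
enum state_sketch {
	STATE_CONSUME = 1 << 0,
	STATE_PRODUCE = 1 << 1,
	STATE_DONE    = 1 << 2,
	STATE_FAIL    = 1 << 3,
	STATE_YIELD   = 1 << 4,
};

/* True if exactly one known state bit is set. */
static bool state_is_single(int s)
{
	const int all = STATE_CONSUME | STATE_PRODUCE | STATE_DONE
			| STATE_FAIL | STATE_YIELD;
	return s != 0 && (s & ~all) == 0 && (s & (s - 1)) == 0;
}

/* Mirrors the rule documented for Lua layers: the call is skipped
 * iff the FAIL bit is set. */
static bool layer_call_allowed(int state)
{
	assert(state > 0); /* this sketch expects at least one state bit */
	return !(state & STATE_FAIL);
}
```

A combined value such as STATE_FAIL | STATE_DONE is not a single state under this check, yet it still suppresses the layer call because the FAIL bit is set.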

-

struct kr_layer¶

- #include <layer.h>

Packet processing context.

Public Members

-

int state¶

The current state; bitmap of enum kr_layer_state.

-

struct kr_request *req¶

The corresponding request.

-

const struct kr_layer_api *api¶

-

knot_pkt_t *pkt¶

In glue for lua kr_layer_api it’s used to pass the parameter.

-

struct sockaddr *dst¶

In glue for checkout layer it’s used to pass the parameter.

-

bool is_stream¶

In glue for checkout layer it’s used to pass the parameter.

-

struct kr_layer_api¶

- #include <layer.h>

Packet processing module API.

All functions return the new kr_layer_state.

Lua modules are allowed to return nil/nothing, meaning the state shall not change.

Public Members

-

int (*begin)(kr_layer_t *ctx)¶

Start of processing the DNS request.

-

int (*reset)(kr_layer_t *ctx)¶

-

int (*finish)(kr_layer_t *ctx)¶

Paired to begin, called both on successes and failures.

-

int (*consume)(kr_layer_t *ctx, knot_pkt_t *pkt)¶

Process an answer from upstream or from cache.

Lua API: call is omitted iff (state & KR_STATE_FAIL).

-

int (*produce)(kr_layer_t *ctx, knot_pkt_t *pkt)¶

Produce either an answer to the request or a query for upstream (or fail).

Lua API: call is omitted iff (state & KR_STATE_FAIL).

-

int (*checkout)(kr_layer_t *ctx, knot_pkt_t *packet, struct sockaddr *dst, int type)¶

Finalises the outbound query packet with the knowledge of the IP addresses.

The checkout layer doesn’t persist the state, so canceled subrequests don’t affect the resolution or rest of the processing. Lua API: call is omitted iff (state & KR_STATE_FAIL).

-

int (*answer_finalize)(kr_layer_t *ctx)¶

Finalises the answer.

Last chance to affect what will get into the answer, including EDNS. Not called if the packet is being dropped.

-

void *data¶

The C module can store anything in here.

-

int cb_slots[]¶

Internal to ./daemon/ffimodule.c.

-

struct kr_layer_pickle¶

- #include <layer.h>

Pickled layer state (api, input, state).

Public Members

-

struct kr_layer_pickle *next¶

-

const struct kr_layer_api *api¶

-

knot_pkt_t *pkt¶

-

unsigned state¶

Utilities¶

Defines

-

KR_STRADDR_MAXLEN¶

Maximum length (excluding null-terminator) of a presentation-form address returned by kr_straddr.

-

kr_require(expression)¶

Assert() but always, regardless of -DNDEBUG.

See also kr_assert().

-

kr_fails_assert(expression)¶

Check an assertion that’s recoverable.

Return true if it fails and needs handling.

If the check fails, optionally fork()+abort() to generate coredump and continue running in parent process. Return value must be handled to ensure safe recovery from error. Use kr_require() for unrecoverable checks. The errno variable is not mangled, e.g. you can: if (kr_fails_assert(…)) return errno;

-

kr_assert(expression)¶

Kresd assertion without a return value.

These can be turned on or off. For mandatory unrecoverable checks, use kr_require(); for recoverable checks, use kr_fails_assert().
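
The recoverable-check pattern can be sketched with a stand-in macro. FAILS_ASSERT and fails_check are hypothetical and only log the failure; the real kr_fails_assert() may additionally fork()+abort() to produce a coredump, as described above.

```c
#include <assert.h>
#include <errno.h>
#include <stdbool.h>
#include <stddef.h>
#include <stdio.h>

/* Hypothetical stand-in for kr_fails_assert(): log the failed expression
 * and report it to the caller instead of aborting. */
static bool fails_check(bool ok, const char *expr, const char *func)
{
	assert(expr != NULL && func != NULL);
	if (!ok) {
		int saved = errno; /* keep errno intact for the caller */
		fprintf(stderr, "assertion \"%s\" failed in %s\n", expr, func);
		errno = saved;
	}
	return !ok;
}
#define FAILS_ASSERT(expr) fails_check((expr), #expr, __func__)

/* Recoverable check: bad input yields an error code, not a crash. */
static int buffer_fill(char *buf, size_t len)
{
	if (FAILS_ASSERT(buf != NULL && len > 0))
		return -EINVAL;
	for (size_t i = 0; i < len; ++i)
		buf[i] = 0;
	return 0;
}
```

The important part is that the return value of the check is always handled, so the function leaves through a defined error path.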

-

KR_DNAME_GET_STR(dname_str, dname)¶

-

KR_RRTYPE_GET_STR(rrtype_str, rrtype)¶

-

KR_RRKEY_LEN¶

-

SWAP(x, y)¶

Swap two places.

Note: the parameters need to be without side effects.
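
Why the arguments must be free of side effects becomes visible once such a macro is written out. SWAP_SKETCH below is a hypothetical byte-wise implementation; the library's SWAP may be written differently, but any macro of this kind evaluates its arguments more than once.

```c
#include <assert.h>
#include <string.h>

/* Hypothetical byte-wise swap macro. Each argument appears several times
 * in the expansion, so an argument like a[i++] would be evaluated repeatedly. */
#define SWAP_SKETCH(x, y) do { \
		unsigned char tmp_[sizeof(x)]; \
		assert(sizeof(x) == sizeof(y)); \
		memcpy(tmp_, &(y), sizeof(x)); \
		memcpy(&(y), &(x), sizeof(x)); \
		memcpy(&(x), tmp_, sizeof(x)); \
	} while (0)

/* Puts two integers into ascending order in place. */
static void order_pair(int *lo, int *hi)
{
	assert(lo != NULL && hi != NULL);
	if (*lo > *hi)
		SWAP_SKETCH(*lo, *hi);
}
```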

Typedefs

-

typedef void (*trace_callback_f)(struct kr_request *request)¶

Callback for request events.

-

typedef void (*trace_log_f)(const struct kr_request *request, const char *msg)¶

Callback for request logging handler.

- Param msg:

[in] Log message. Pointer is not valid after handler returns.

-

typedef struct kr_http_header_array_entry kr_http_header_array_entry_t¶

-

typedef see_source_code kr_http_header_array_t¶

Array of HTTP headers for DoH.

-

typedef struct timespec kr_timer_t¶

Timer, i.e. a stopwatch.

Functions

-

void kr_fail(bool is_fatal, const char *expr, const char *func, const char *file, int line)¶

Use kr_require(), kr_assert() or kr_fails_assert() instead of calling this function directly.

-

static inline bool kr_assert_func(bool result, const char *expr, const char *func, const char *file, int line)¶

Use kr_require(), kr_assert() or kr_fails_assert() instead of calling this function directly.

-

static inline int strcmp_p(const void *p1, const void *p2)¶

A strcmp() variant directly usable for qsort() on an array of strings.
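A comparator of this kind is needed because qsort() passes pointers to the array elements, i.e. pointers to the string pointers, so strcmp() cannot be used directly. The sketch below uses its own comparator, strcmp_p_sketch(), as an assumed equivalent; sort_names() is a hypothetical helper.

```c
#include <assert.h>
#include <stdlib.h>
#include <string.h>

/* Assumed shape of a strcmp_p()-style comparator: both arguments point
 * to array elements, and each element is itself a pointer to a string. */
static int strcmp_p_sketch(const void *p1, const void *p2)
{
	const char *s1 = *(const char * const *)p1;
	const char *s2 = *(const char * const *)p2;
	return strcmp(s1, s2);
}

/* Hypothetical helper: sorts an array of C strings in place. */
static void sort_names(const char **names, size_t count)
{
	assert(names != NULL || count == 0);
	qsort(names, count, sizeof(names[0]), strcmp_p_sketch);
}
```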

-

static inline void get_workdir(char *out, size_t len)¶

Get current working directory with fallback value.

-

char *kr_strcatdup(unsigned n, ...)¶

Concatenate N strings.

-

char *kr_absolutize_path(const char *dirname, const char *fname)¶

Construct absolute file path, without resolving symlinks.

- Returns:

malloc-ed string or NULL (+errno in that case)

-

void kr_rnd_buffered(void *data, unsigned int size)¶

You probably want kr_rand_* convenience functions instead.

This is a buffered version of gnutls_rnd(GNUTLS_RND_NONCE, ..)

-

inline uint64_t kr_rand_bytes(unsigned int size)¶

Return a few random bytes.

-

static inline bool kr_rand_coin(unsigned int nomin, unsigned int denomin)¶

Throw a pseudo-random coin, succeeding approximately with probability nomin/denomin.

Low precision: only one byte of randomness is used (or none with extreme parameters).

Tip: use !kr_rand_coin() to get the complementary probability.
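One way such a one-byte coin could work is sketched below. This is an assumption made for illustration and not the library's code: coin_from_byte() and coin_sketch() are hypothetical names, and rand() stands in for the library's random source.

```c
#include <assert.h>
#include <stdbool.h>
#include <stdint.h>
#include <stdlib.h>

/* Decide the coin from a single random byte. The extreme cases need no
 * randomness at all; otherwise the byte is compared against a threshold
 * of floor(256 * nomin / denomin), giving a precision of 1/256. */
static bool coin_from_byte(uint8_t byte, unsigned int nomin, unsigned int denomin)
{
	if (nomin == 0)
		return false;
	if (nomin >= denomin)
		return true;
	const unsigned int threshold =
		(unsigned int)(((uint64_t)nomin * 256) / denomin);
	assert(threshold < 256);
	return byte < threshold;
}

/* Hypothetical wrapper using rand() as the byte source. */
static bool coin_sketch(unsigned int nomin, unsigned int denomin)
{
	return coin_from_byte((uint8_t)(rand() & 0xff), nomin, denomin);
}
```

Because only 256 outcomes exist, probabilities below 1/256 round down to "never" in this sketch, which is the kind of precision limit the description warns about.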

-

int kr_memreserve(void *baton, void **mem, size_t elm_size, size_t want, size_t *have)¶

Memory reservation routine for knot_mm_t.

-

int kr_pkt_recycle(knot_pkt_t *pkt)¶

-

int kr_pkt_clear_payload(knot_pkt_t *pkt)¶

-

int kr_pkt_put(knot_pkt_t *pkt, const knot_dname_t *name, uint32_t ttl, uint16_t rclass, uint16_t rtype, const uint8_t *rdata, uint16_t rdlen)¶

Construct and put record to packet.

-

void kr_pkt_make_auth_header(knot_pkt_t *pkt)¶

Set packet header suitable for authoritative answer.

(for policy module)

-

static inline knot_dname_t *kr_pkt_qname_raw(const knot_pkt_t *pkt)¶

Get pointer to the in-header QNAME.

That’s normally not lower-cased. However, when receiving packets from upstream we xor-apply the secret during packet-parsing, so it would get lower-cased after that point if the case was right.

-

const char *kr_inaddr(const struct sockaddr *addr)¶

Address bytes for given family.

-

int kr_inaddr_family(const struct sockaddr *addr)¶

Address family.

-

int kr_inaddr_len(const struct sockaddr *addr)¶

Address length for given family, i.e. sizeof(struct in*_addr).

-

int kr_sockaddr_len(const struct sockaddr *addr)¶

Sockaddr length for given family, i.e. sizeof(struct sockaddr_in*).
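The difference between the address length and the sockaddr length can be sketched with plain POSIX types. The functions inaddr_len_sketch() and sockaddr_len_sketch() are hypothetical stand-ins, and returning -EINVAL for an unknown family is an assumption of this example.

```c
#include <assert.h>
#include <errno.h>
#include <netinet/in.h>
#include <stddef.h>
#include <sys/socket.h>

/* Length of the raw address bytes: 4 for IPv4, 16 for IPv6. */
static int inaddr_len_sketch(const struct sockaddr *addr)
{
	assert(addr != NULL);
	switch (addr->sa_family) {
	case AF_INET:  return (int)sizeof(struct in_addr);
	case AF_INET6: return (int)sizeof(struct in6_addr);
	default:       return -EINVAL; /* assumed error convention */
	}
}

/* Length of the whole socket address structure for the family. */
static int sockaddr_len_sketch(const struct sockaddr *addr)
{
	assert(addr != NULL);
	switch (addr->sa_family) {
	case AF_INET:  return (int)sizeof(struct sockaddr_in);
	case AF_INET6: return (int)sizeof(struct sockaddr_in6);
	default:       return -EINVAL; /* assumed error convention */
	}
}
```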

-

ssize_t kr_sockaddr_key(struct kr_sockaddr_key_storage *dst, const struct sockaddr *addr)¶

Creates a packed structure from the specified addr, safe for use as a key in containers like trie_t, and writes it into dst. On success, returns the actual length of the key.

Returns kr_error(EAFNOSUPPORT) if the family of addr is unsupported.

-

struct sockaddr *kr_sockaddr_from_key(struct sockaddr_storage *dst, const char *key)¶

Creates a struct sockaddr from the specified key created using the kr_sockaddr_key() function.

-

bool kr_sockaddr_key_same_addr(const char *key_a, const char *key_b)¶

Checks whether the two keys represent the same address; does NOT compare the ports.

-

int kr_sockaddr_cmp(const struct sockaddr *left, const struct sockaddr *right)¶

Compare two given sockaddr structures.

Returns 0 if the addresses are equal, an error code otherwise.

-

uint16_t kr_inaddr_port(const struct sockaddr *addr)¶

Port.

-

void kr_inaddr_set_port(struct sockaddr *addr, uint16_t port)¶

Set port.

-

int kr_inaddr_str(const struct sockaddr *addr, char *buf, size_t *buflen)¶

Write string representation for given address as “<addr>#<port>”.

- Parameters:

addr – [in] the raw address

buf – [out] the buffer for output string

buflen – [inout] the available (in) and utilized (out) length, including \0
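The “<addr>#<port>” format and the in/out buflen contract can be sketched with standard POSIX calls. addr_to_str_sketch() is a hypothetical helper written for this example; its error codes are assumptions and it is not the library's implementation.

```c
#include <arpa/inet.h>
#include <assert.h>
#include <errno.h>
#include <netinet/in.h>
#include <stdint.h>
#include <stdio.h>
#include <string.h>
#include <sys/socket.h>

/* Write "<addr>#<port>" into buf. On input *buflen is the buffer size;
 * on success it is updated to the bytes used, including the trailing \0. */
static int addr_to_str_sketch(const struct sockaddr *addr, char *buf, size_t *buflen)
{
	if (!addr || !buf || !buflen)
		return -EINVAL;
	char ip[INET6_ADDRSTRLEN];
	const void *raw;
	uint16_t port;
	if (addr->sa_family == AF_INET) {
		const struct sockaddr_in *a4 = (const struct sockaddr_in *)addr;
		raw = &a4->sin_addr;
		port = ntohs(a4->sin_port);
	} else if (addr->sa_family == AF_INET6) {
		const struct sockaddr_in6 *a6 = (const struct sockaddr_in6 *)addr;
		raw = &a6->sin6_addr;
		port = ntohs(a6->sin6_port);
	} else {
		return -EAFNOSUPPORT;
	}
	if (!inet_ntop(addr->sa_family, raw, ip, sizeof(ip)))
		return -errno;
	const int n = snprintf(buf, *buflen, "%s#%u", ip, (unsigned int)port);
	if (n < 0 || (size_t)n >= *buflen)
		return -ENOSPC; /* output did not fit */
	assert(buf[n] == '\0');
	*buflen = (size_t)n + 1; /* utilized length, including \0 */
	return 0;
}
```

A caller would typically pass a stack buffer together with its size and read the utilized length back from the same variable afterwards.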

-

int kr_ntop_str(int family, const void *src, uint16_t port, char *buf, size_t *buflen)¶

Write string representation for given address as “<addr>#<port>”.

It’s the same as kr_inaddr_str(), but the address is given in native format, as for inet_ntop() (4 or 16 bytes), and the port must be passed as a separate parameter.

-

char *kr_straddr(const struct sockaddr *addr)¶

-

int kr_straddr_family(const char *addr)¶

Return address type for string.

-

int kr_family_len(int family)¶

Return address length in given family (struct in*_addr).

-

struct sockaddr *kr_straddr_socket(const char *addr, int port, knot_mm_t *pool)¶